An SEO audit is an incredibly important part of any SEO strategy.

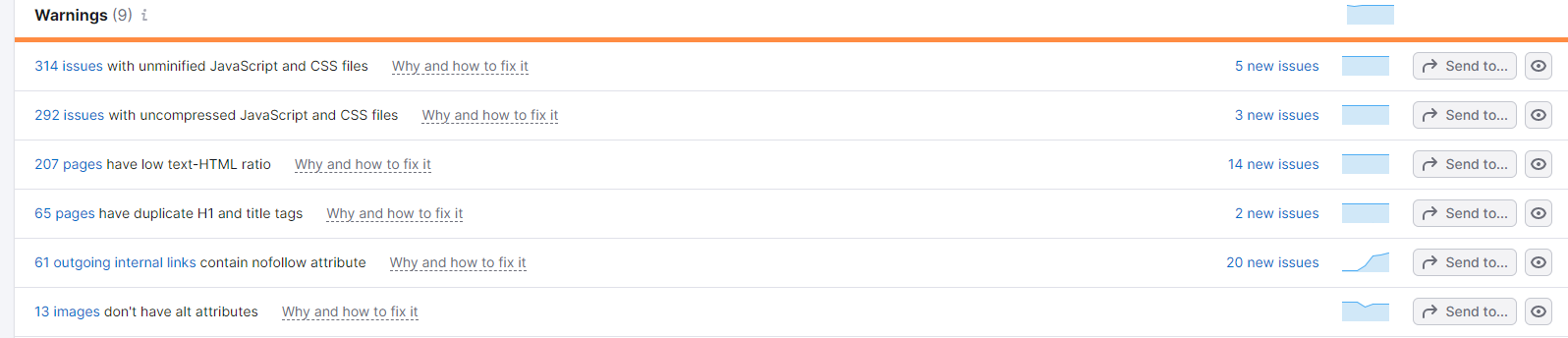

There are many companies that try to make this easy. SEMrush, for example, lets you plug in your domain and then provides suggestions.

However, most of these suggestions are not helpful for most business owners.

How do I unminify my JavaScript? What is CSS anyway?

These warnings certainly aren’t actionable and may be tedious for most web developers to fix as well.

What if you could create an SEO audit that updates automatically with the latest crawl of your website according to Google?

This article will show you how to get started.

Why Have an Automated SEO Audit Instead of a Regular Audit?

Members of my marketing team know that I prefer automation over manual work all day long.

Creating an automated SEO audit involves pushing the crawl data from Screaming Frog to a Google Sheet on a daily, weekly, or monthly basis.

Crawl data includes a ton of valuable information such as meta titles and descriptions, alt text, and internal links.

But why not just open Screaming Frog and crawl the website each time you need to?

Well, depending on the size of the site, Screaming Frog may take between 5 and 15 minutes to fully crawl every page of your site and all of the links within those pages.

An automated crawl runs at the time of your choosing, allowing you to open and view the data instantly.

Once a scheduled crawl has run, you’ll have a Screaming Frog file saved to your computer. Opening this file brings up the most recent crawl of your website.

This only gives you a quicker way of viewing your website’s technical data, however. Once you push the data to Google Sheets, you can avoid going into Screaming Frog altogether.

From there, you can better visualize the data and take specific actions.

What Data Will This Automated SEO Audit Allow You to See?

With this automated audit, you can view:

- Categorized pages of your site (pages vs blogs)

- Indexability of all pages

- Meta titles (and length)

- Meta descriptions (and length)

- H1s and H2s

- Status codes of all pages on your site (200, 301, 404)

- Google’s page speed score (out of 100)

- Alt text for all images (and which images are missing alts and where they are)

- Internal links for every page

- Pages with low word counts

- Organic traffic data at a glance

- Clicks, impressions, CTR and average position (from Google Search Console)

- A checklist of all tagging and site structure recommendations your business site should follow

All of this data is included in generic SEO audits you may have from other companies like HubSpot and SEMrush.

The difference here is that you will have an actionable Google Sheets report at your disposal that updates automatically, with no need for a subscription to a third-party service to run the audit itself.
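As a rough illustration of how a few of the checks listed above could be computed from a crawl export, here is a minimal Python sketch. It assumes the crawl has been exported as CSV with Screaming Frog-style column names (`Address`, `Status Code`, `Title 1`, `Meta Description 1`, `Word Count`). The length and word-count thresholds, the `/blog/` path convention, and the function names are illustrative assumptions, not part of the audit described in this article.

```python
import csv
import io

# Assumed thresholds for illustration; tune them to your own guidelines.
MAX_TITLE_LENGTH = 60
MAX_DESCRIPTION_LENGTH = 155
MIN_WORD_COUNT = 300


def cell(row, name):
    """Return a trimmed cell value, treating missing columns as empty."""
    return (row.get(name) or "").strip()


def categorize(url):
    """Label a URL as 'blog' or 'page' using an assumed '/blog/' path convention."""
    return "blog" if "/blog/" in url else "page"


def audit_rows(csv_text):
    """Return (url, category, issues) tuples for every row with at least one issue."""
    findings = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        issues = []
        title = cell(row, "Title 1")
        description = cell(row, "Meta Description 1")
        status = cell(row, "Status Code")
        word_count = cell(row, "Word Count")
        if not title:
            issues.append("missing title")
        elif len(title) > MAX_TITLE_LENGTH:
            issues.append("title too long")
        if not description:
            issues.append("missing meta description")
        elif len(description) > MAX_DESCRIPTION_LENGTH:
            issues.append("meta description too long")
        if status != "200":
            issues.append("status " + (status or "unknown"))
        if word_count.isdigit() and int(word_count) < MIN_WORD_COUNT:
            issues.append("low word count")
        if issues:
            url = cell(row, "Address")
            findings.append((url, categorize(url), issues))
    return findings


# Made-up sample rows standing in for a real export.
SAMPLE = """Address,Status Code,Title 1,Meta Description 1,Word Count
https://example.com/,200,Home,Welcome to our site,850
https://example.com/blog/post,200,,A post,120
https://example.com/old,404,Old page,Gone,0
"""

for url, category, issues in audit_rows(SAMPLE):
    print(category, url, ", ".join(issues))
```

In the Google Sheets report, the same kind of filtering is handled by spreadsheet functions; the sketch is only meant to make the logic behind each check concrete.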

Creating the Automated SEO Audit

The first step in the audit is to set up the crawl to run automatically.

After that, we need to manipulate the data. I’ll be using the QUERY function in Google Sheets, which is an advanced function. If you follow this guide, you won’t need to modify any of the functions very heavily.

My entire team has set up multiple automated SEO audits without knowing the QUERY function in depth.

After we manipulate the data, we can then use SEMrush via Supermetrics to pull in organic data to set benchmarks.

Finally, we’ll create a separate sheet within our report to track all internal links.

Setting Up the Crawl to Run Automatically

Begin by opening Screaming Frog. You can download it from the Screaming Frog website if it is not already installed.

Ensure you have a paid license added to the program so you can crawl more than 500 URLs. Larger websites will take longer to crawl.

Navigate to File → Scheduling and create a new schedule.

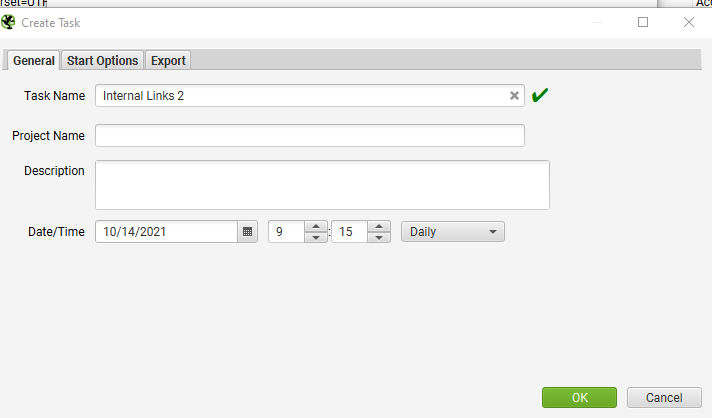

You’ll need to modify the settings in the General, Start Options, and Export tabs.

General

Give the crawl a relevant name and choose what time of day and frequency it will run.

A daily crawl allows you to open your automated SEO audit any morning to see the latest version of your website.

This is helpful if your team made updates the previous day and you want to ensure that all SEO items were taken care of.

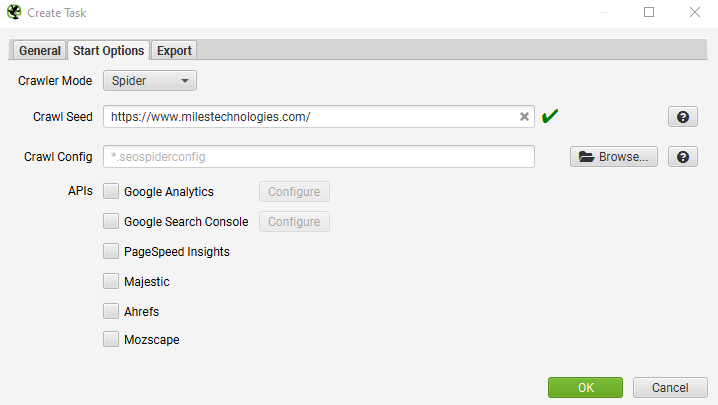

Start Options

Add the root URL of the website.

You can leave the crawl config blank.

If you’d like to pull in Google Analytics or Google Search Console data, you can do so by connecting the Gmail account associated with them.

For this audit, we’re going to be pulling organic data from Google Search Console via Supermetrics. If you don’t have a paid Supermetrics account, you can pull data directly from these networks.

However, I’ve found that you can’t get as many helpful metrics out of this integration at this time.

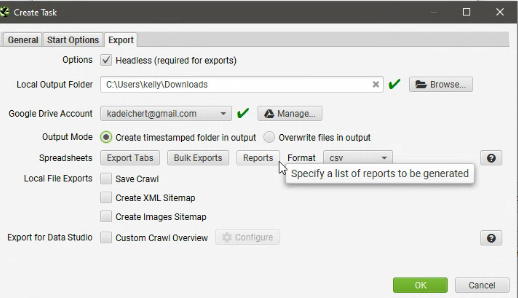

Export

This tab has the most steps to check, but I’ll summarize them below.

- Check “headless (required for exports)”

- Choose the local folder that the crawl will save to

- Connect a Gmail account (in order to use Google Sheets)

- Choose “create timestamped folder in output.”

- “Overwrite files” tends not to overwrite the file itself. This is a bug that Screaming Frog’s tech support acknowledged but was unable to fix.

- Change the format to gsheet

- When the crawl runs, you’ll end up seeing multiple folders in the output that look like this:

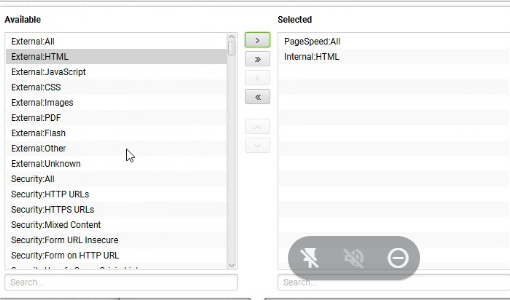

- Click “Export Tabs” and choose “Internal:All” and “PageSpeed:All”

- You can also add “Search Console:All” for more data

- Click “Bulk Exports” and add “Links:All Inlinks” and “Images:All Image Inlinks”

- This allows you to export image alt text and identify which pages link to each other.
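To make the purpose of the two bulk exports more concrete, here is a small Python sketch of what can be derived from them: images missing alt text grouped by the page that embeds them, and a count of links pointing at each URL. It assumes the exports are available as CSV with `Source`, `Destination`, and `Alt Text` columns; the function names and sample rows are hypothetical.

```python
import csv
import io
from collections import Counter, defaultdict


def missing_alt_by_page(image_inlinks_csv):
    """Map each source page to the image URLs it embeds without alt text."""
    missing = defaultdict(list)
    for row in csv.DictReader(io.StringIO(image_inlinks_csv)):
        if not (row.get("Alt Text") or "").strip():
            missing[row["Source"]].append(row["Destination"])
    return dict(missing)


def inlink_counts(inlinks_csv):
    """Count how many links point at each destination URL."""
    rows = csv.DictReader(io.StringIO(inlinks_csv))
    return Counter(row["Destination"] for row in rows)


# Made-up rows standing in for the image inlinks export.
IMAGES = """Source,Destination,Alt Text
https://example.com/,https://example.com/logo.png,Company logo
https://example.com/,https://example.com/hero.jpg,
https://example.com/about,https://example.com/team.jpg,
"""

# Made-up rows standing in for the all inlinks export.
LINKS = """Source,Destination
https://example.com/,https://example.com/about
https://example.com/blog,https://example.com/about
https://example.com/about,https://example.com/
"""

print(missing_alt_by_page(IMAGES))
print(inlink_counts(LINKS).most_common())
```

Pages with very few inlinks, or images that show up in the first result, are natural candidates for the action items in the report.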

PageSpeed data often adds considerable time to the automated audit’s run. To connect PageSpeed Insights, you’ll need to create an API key via the API Access section of Screaming Frog.

This is simpler than it sounds: Google generates the key, and you just need to enter it in the settings.

Once you click PageSpeed Insights, you’ll be able to create a project in Google’s API dashboard and paste the API key into your Screaming Frog settings.
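For context on what that API key is used for, the sketch below shows how a request to Google’s PageSpeed Insights API (v5) can be built and how the 0 to 100 score can be read from a response. Screaming Frog handles this for you; the helper names and the `YOUR_API_KEY` placeholder are illustrative, and no network request is made here.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url, api_key, strategy="mobile"):
    """Build the request URL for one page; strategy is 'mobile' or 'desktop'."""
    query = urlencode({"url": page_url, "key": api_key, "strategy": strategy})
    return f"{PSI_ENDPOINT}?{query}"


def performance_score(psi_response):
    """Convert the Lighthouse performance score (0 to 1) into a 0 to 100 value."""
    score = psi_response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


# Placeholder key; substitute the key created in Google's API dashboard.
print(build_psi_url("https://example.com/", "YOUR_API_KEY"))

# A trimmed, made-up response containing only the field read above.
sample_response = {"lighthouseResult": {"categories": {"performance": {"score": 0.87}}}}
print(performance_score(sample_response))
```

Each crawled URL needs its own request, which is one reason PageSpeed data lengthens the crawl.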

After you allow the crawl to run, you can navigate to the folder on your computer and see the crawl as a Screaming Frog file.

Opening this file will immediately bring up the entire run of the domain that you chose to crawl.

As mentioned above, this already saves a ton of time because you don’t have to run the crawl to see the data.

You should now see a folder in your Google Drive called “Screaming Frog SEO Spider” which has the data you created.
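The scheduler settings above map roughly onto a headless command-line run. The Python sketch below only assembles such a command as a list of arguments. The executable name and every flag shown are assumptions based on Screaming Frog’s command-line options and may differ by version or operating system, so check the program’s own `--help` output before relying on any of them.

```python
def build_crawl_command(site_url, output_folder, executable="screamingfrogseospider"):
    """Assemble an assumed headless crawl command; verify each flag against --help."""
    return [
        executable,                    # assumed Linux launcher name
        "--crawl", site_url,           # root URL, as in Start Options
        "--headless",                  # run without the UI (required for exports)
        "--save-crawl",                # keep the crawl file on disk
        "--output-folder", output_folder,
        "--timestamped-output",        # one timestamped folder per run
        "--export-format", "csv",      # assumed value; the article uses gsheet
        "--export-tabs", "Internal:All",
        "--bulk-export", "Links:All Inlinks,Images:All Image Inlinks",
    ]


command = build_crawl_command("https://example.com", "/tmp/crawls")
print(" ".join(command))
# To actually run it, pass the list to subprocess.run(command, check=True).
```

Building the command as a list rather than a single string avoids shell-quoting problems with export names that contain spaces.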

Manipulating the Data

After you’ve set up your automatic crawls, you’ll need a place to put the data.

Screaming Frog has an awesome setting that allows you to overwrite existing files.

Unfortunately, it doesn’t work.

Until this does work, you’ll have to apply some manual work to this automatic process.

Honestly, it’s not too bad, because you can keep a snapshot of your website from the last time you updated the crawl and compare it with the newest one as a before and after version.

That way, when you update the report to show the most recent version, you’ll be able to see the changes in action.
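A before-and-after comparison like this can be sketched in a few lines of Python. The example assumes both snapshots are CSV exports keyed by an `Address` column; the tracked columns and function names are illustrative choices rather than part of the report itself.

```python
import csv
import io

# Columns compared between snapshots; an assumed, adjustable selection.
TRACKED_COLUMNS = ["Status Code", "Title 1", "Meta Description 1"]


def load_snapshot(csv_text):
    """Index the rows of one crawl export by URL."""
    return {row["Address"]: row for row in csv.DictReader(io.StringIO(csv_text))}


def diff_snapshots(before_csv, after_csv):
    """Return (url, what_changed, old_value, new_value) tuples."""
    before, after = load_snapshot(before_csv), load_snapshot(after_csv)
    changes = []
    for url in sorted(before.keys() | after.keys()):
        if url not in before:
            changes.append((url, "added", None, None))
        elif url not in after:
            changes.append((url, "removed", None, None))
        else:
            for column in TRACKED_COLUMNS:
                old, new = before[url].get(column), after[url].get(column)
                if old != new:
                    changes.append((url, column, old, new))
    return changes


# Made-up snapshots standing in for two crawl exports.
BEFORE = """Address,Status Code,Title 1
https://example.com/,200,Home
https://example.com/old,200,Old page
"""

AFTER = """Address,Status Code,Title 1
https://example.com/,200,Home | Example Co
https://example.com/new,200,New page
https://example.com/old,404,Old page
"""

for change in diff_snapshots(BEFORE, AFTER):
    print(change)
```

Columns that are missing from both exports are simply treated as unchanged, so the same sketch works on exports with fewer fields.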

If you’d like help creating and managing an automated SEO audit, just give us a call and we can discuss how it can be a part of your marketing strategy.